
That would imply that there are multiple entries for Squid in your config file. I'm not sure how that would have happened, but you can probably make a backup of your config, remove the duplicate entries, and restore it. This can be done with Squid (a proxy server) and squidGuard. Then we need to configure the program used to rewrite the URL (you may.

Hello,

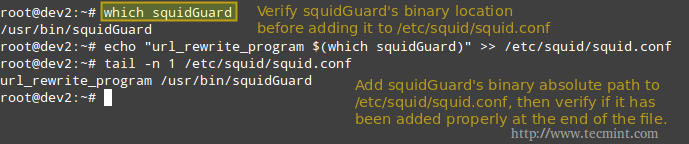

I am taking an online pen testing class and the current assignment is to implement a program (googleSearch.pl) on another machine on my network. I am running Kali on a machine that dual boots with Mint. In Kali I have installed Squid 4.6 and Apache2.

In squid.conf I ran into problems when I changed http_port 3128 to 'transparent', so I left it as is and added port 3129 as transparent. Squid starts fine now. I added 'url_rewrite_program /root/Files/googleSearch.pl' to the end of squid.conf. After starting squid and apache2 (not sure yet what apache2 does) and arpspoofing the target PC (port 80 to port 3129), the target machine is definitely arpspoofed (it is a Windows 10 machine and arp -a shows duplicate physical addresses, so I think that part is fine).

The .pl is supposed to append text to the end of search queries (i.e. 'espn' becomes 'espn in my pants'). It does not. I am wondering if I have done something wrong or if the .pl is not working correctly. My programming experience is primarily in Java, but Perl isn't that complicated and the code makes sense to me.

The changes I made to squid.conf are:

- uncommented 'acl localnet src 192.168.1.1/16'
- uncommented 'http_access allow localnet'
- added 'http_port 3129 transparent'
- left 'http_port 3128' unedited and uncommented
- added 'url_rewrite_program /root/Files/googleSearch.pl' at the end of the file

Changes to NAT:

- iptables -t nat -A PREROUTING -i wlan1 -p tcp --dport 80 -j REDIRECT --to-port 3129
- echo 1 > /proc/sys/net/ipv4/ip_forward
- iptables -I INPUT -p tcp --dport 3129 -j ACCEPT

I am doing this wirelessly, but on my own network, to which the target machine is also connected. Any help would certainly be appreciated. Thank you so much. I just joined this community as I have heard it is a great place to go for Linux help.
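For reference, the kind of helper the post describes can be sketched in a few lines. This is not the googleSearch.pl from the class (its source is not shown here) but a minimal Python stand-in. It makes several assumptions: the helper interface of Squid 3.4 and later, where each request arrives as one line starting with the URL and the helper answers `OK rewrite-url="..."` or `ERR`; helper concurrency left disabled, so no channel ID precedes the URL; and a search term carried in a query parameter named `q`. The names `SUFFIX` and `rewrite_line` are made up for illustration. A helper like this can only act on requests that reach Squid as plain HTTP on the intercepted port.

```python
import sys
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

SUFFIX = " in my pants"  # example text from the post; any string works


def rewrite_line(line: str) -> str:
    """Build the helper reply for one request line sent by Squid.

    Assumes the URL is the first whitespace-separated field
    (no concurrency channel ID in front of it).
    """
    fields = line.split()
    if not fields:
        return "ERR"  # ERR tells Squid to leave the URL unchanged
    parts = urlsplit(fields[0])
    params = parse_qsl(parts.query, keep_blank_values=True)
    if not any(key == "q" for key, _ in params):
        return "ERR"  # no search term (assumed to be "q"), nothing to do
    # Append the suffix once; the endswith check avoids stacking it
    # if an already rewritten URL passes through the helper again.
    new_params = [
        (key, value + SUFFIX if key == "q" and not value.endswith(SUFFIX) else value)
        for key, value in params
    ]
    new_url = urlunsplit(parts._replace(query=urlencode(new_params)))
    return f'OK rewrite-url="{new_url}"'


def main() -> None:
    # Squid keeps the helper running and feeds it one request per line.
    for line in sys.stdin:
        sys.stdout.write(rewrite_line(line) + "\n")
        sys.stdout.flush()  # replies must not sit in a buffer


if __name__ == "__main__":
    main()
```

Running the script by hand and typing a URL at it is a quick way to tell a broken helper apart from a Squid or iptables problem: if the helper prints a rewritten URL on its own but nothing changes in the browser, the requests are probably not reaching it.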

This repo holds some squid-helpers I have written, such as:

dynamic content squid + ICAP caching method

This is not a Squid 2.7 store_url_rewrite method; it is meant for Squid 3+. It was tested on Squid 3.1.19 and works on Squid 3.2.17, but only as a forward proxy and not in tproxy/intercept mode. What we do is manipulate the requests of two proxies arranged in a hierarchy:

squid1 -----> squid2 ---> YouTube / dynamic content site

Both proxies talk to the same ICAP server, which is backed by a MySQL database.

1. squid1 gets a request from the client and sends it to the ICAP server.
2. The ICAP server strips the needed data from the URL, composes a URL for internal use, and stores the URL id and the original URL in the DB as a pair.
3. ACLs on squid1 prevent it from sending an ICAP request to the ICAP server, and it uses squid2 as a cache peer, either through a tproxy router/bridge or as a direct cache_peer. Up to this point the client doesn't know a thing and thinks it is on the way to getting the original URL.
4. squid2 gets the request from squid1 as an internal URL such as 'http://youtube.squid.internal/dynamic_id_url'.
5. squid2 then sends an ICAP request to the ICAP server.
6. The ICAP server checks the database for a URL that matches the id of the dynamic content and rewrites the URL to the original one.
7. squid2 then fetches the original, rewritten URL for squid1 (squid1 thinks it is getting 'http://youtube.squid.internal/dynamic_id_url') and the dynamic content is served to the client.
8. The next time someone tries to get the dynamic content, they get the cached data from squid1 if it is still in the cache.
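The URL bookkeeping that the ICAP server does in this flow can be sketched as follows. This is only an illustration of the idea, not the code from the repo: it uses an in-memory SQLite table in place of MySQL, leaves out the ICAP protocol itself, and derives the id from a hash of a few query parameters. The parameter names in `STABLE_PARAMS` and the function names are hypothetical; which parts of a URL actually identify a video has to be worked out for the real site.

```python
import hashlib
import sqlite3
from urllib.parse import parse_qs, urlsplit

INTERNAL_PREFIX = "http://youtube.squid.internal/"
# Hypothetical choice of parameters that identify the content;
# everything else (tokens, expiry times, ...) is ignored for the id.
STABLE_PARAMS = ("id", "itag", "range")


def make_db() -> sqlite3.Connection:
    """Create the id -> original URL table (SQLite stands in for MySQL)."""
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE url_map (url_id TEXT PRIMARY KEY, original_url TEXT NOT NULL)"
    )
    return db


def to_internal(db: sqlite3.Connection, original_url: str) -> str:
    """squid1 side: store the (id, original URL) pair, return the internal URL."""
    query = parse_qs(urlsplit(original_url).query)
    stable = "&".join(f"{name}={query.get(name, [''])[0]}" for name in STABLE_PARAMS)
    url_id = hashlib.sha1(stable.encode()).hexdigest()
    # Keep the most recent original URL for this id.
    db.execute(
        "INSERT OR REPLACE INTO url_map (url_id, original_url) VALUES (?, ?)",
        (url_id, original_url),
    )
    return INTERNAL_PREFIX + url_id


def to_original(db: sqlite3.Connection, internal_url: str):
    """squid2 side: map an internal URL back to the stored original, or None."""
    if not internal_url.startswith(INTERNAL_PREFIX):
        return None
    url_id = internal_url[len(INTERNAL_PREFIX):]
    row = db.execute(
        "SELECT original_url FROM url_map WHERE url_id = ?", (url_id,)
    ).fetchone()
    return row[0] if row else None
```

Two requests for the same content that differ only in volatile parameters map to the same internal URL, which is what lets squid1 serve the second one from its cache, while squid2 can still recover a working original URL on a cache miss.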

A more detailed explanation and the history of the process are in the squid-users mailing list post on the topic. How to implement it and the needed scripts are in /squid-helpers/youtubetwist.

NEW: a StoreID Perl helper that helps cache YouTube videos.

I also have a store_url_rewrite helper for Squid 2.7 that is meant to help store YouTube videos in the cache.

There are also samples of code that other people wrote.

Caching YouTube videos using the above method will not work, since Google has changed its static way of publishing videos. If you want more details on the subject, contact me via my private email [email protected] or via the squid-users mailing list at: [email protected]

proxy_hb_check

Two scripts:

- proxyhb.sh - heartbeat checker for the status of an HTTP proxy, using a specific HTTP target.
- proxystatcheck.sh - external ACL helper for Squid that retrieves the status of the proxy.

These scripts can be used with SNMP to check the load of the proxy. They will be ported into a routing heartbeat mechanism.
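The heartbeat idea can be illustrated with a short sketch. The real scripts are shell and are not reproduced here; this Python version only shows the general technique under assumptions: the proxy counts as "up" if a request for a known target URL, sent through it, returns a 2xx or 3xx status, and the external ACL helper turns that into the `OK` / `ERR` replies Squid expects from such helpers. The function names, the timeout, and the status range are illustrative choices.

```python
import http.client
import urllib.request


def proxy_is_up(proxy_url: str, target_url: str, timeout: float = 5.0) -> bool:
    """Fetch target_url through the proxy; True if a 2xx/3xx answer comes back."""
    opener = urllib.request.build_opener(
        urllib.request.ProxyHandler({"http": proxy_url})
    )
    try:
        with opener.open(target_url, timeout=timeout) as response:
            return 200 <= response.status < 400
    except (OSError, http.client.HTTPException):
        # Connection refused, timeout, HTTP error status, broken reply, ...
        return False


def acl_reply(is_up: bool) -> str:
    """Reply line for a Squid external ACL helper: OK matches, ERR does not."""
    return "OK" if is_up else "ERR"
```

A helper built on this would read one line per lookup from stdin and print `acl_reply(...)` for each. Caching the last heartbeat result instead of probing on every lookup is probably worthwhile, since each probe can block for up to the timeout.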
